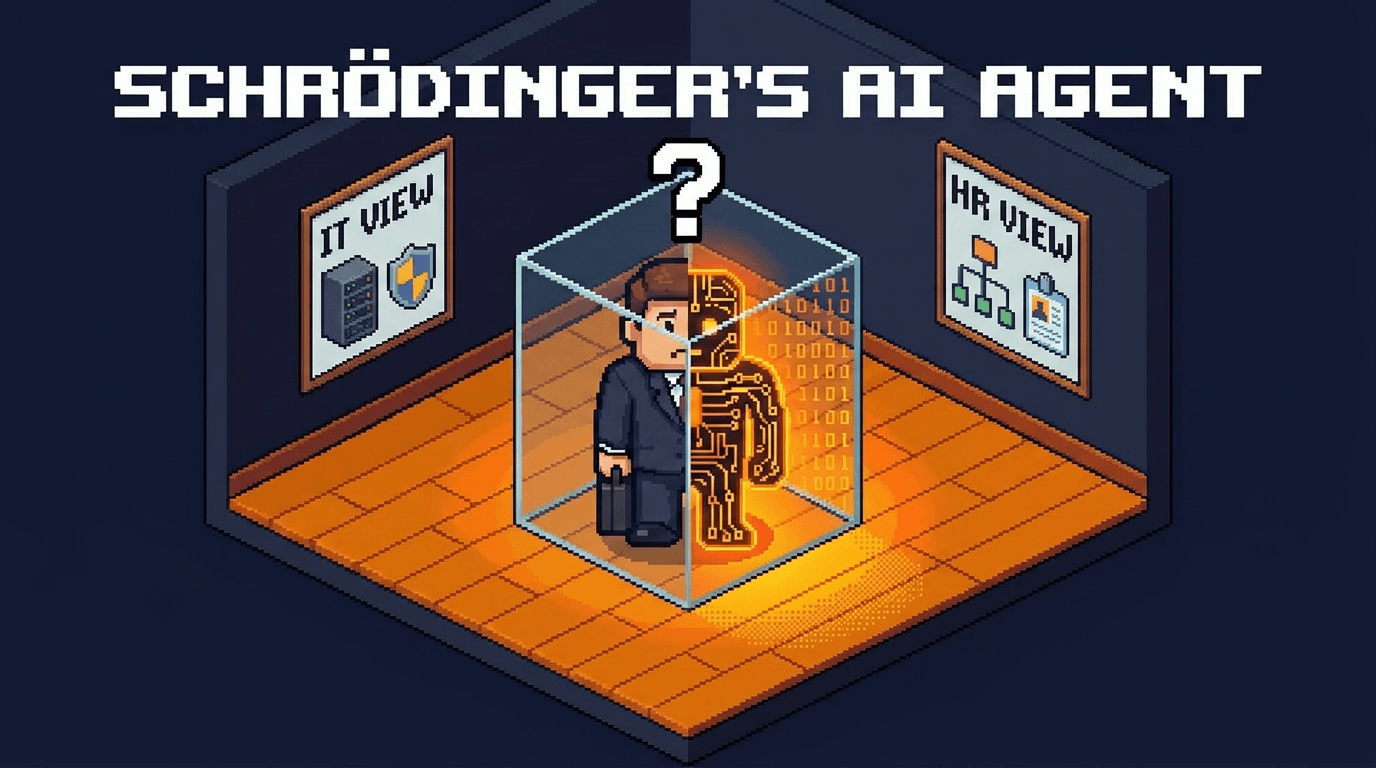

An AI agent is both code and worker. The moment you try to manage it, you have to choose. That choice will have consequences.

In 1935, Erwin Schrödinger described a thought experiment to expose a paradox at the heart of quantum mechanics. A cat sealed in a box with a radioactive atom is, until observed, simultaneously alive and dead. Both states are real. Neither resolves until you open the box.

He meant it as a reductio ad absurdum. Ninety years later, it turns out to be a remarkably precise description of how enterprises are deploying AI agents.

The Superposition Problem

When an AI agent enters your organization, it occupies two states at once.

As software, it has a version, a vendor, a deployment environment, a security posture. Your IT team can patch it, monitor it, deprecate it. It lives in your infrastructure stack alongside your SaaS tools and internal services.

As a worker, it has a role, a scope of authority, a set of tasks it performs autonomously. It makes decisions. It takes actions with downstream consequences. It operates within workflows the same way a contractor or employee would, and the outputs it produces carry real organizational weight.

An unmanaged agent is both of these things simultaneously. And for a while, that ambiguity is fine. Organizations have learned to tolerate it. The agent ships. It works. No one asks hard questions about which lens applies.

This is the superposition state. Both classifications exist. Neither has collapsed.

The Collapse

The problem arrives the moment you try to manage the agent seriously.

Management requires observation. And observation, in the thought experiment's terms, forces a collapse.

When your security team audits the agent, they treat it as software. They assess it for vulnerabilities, check its access scopes, evaluate its integrations. The worker dimension disappears from view.

When your operations team tries to govern its behavior, they treat it as a worker. They ask: what is this agent authorized to do? Who approved it? What happens when it makes a mistake? The software dimension disappears from view.

Each team is working from a single-state model. Each model is incomplete. And because both collapses are happening simultaneously, in different parts of the organization, no one has a coherent picture of what the agent actually is or what it's actually doing.

The tools available to each team were built around the traditional classifications: software or worker. So each team reaches for its preferred instrument, and that instrument shapes what the team can see.

Both Outcomes Are Deterministic

Unlike Schrödinger's cat, where the outcome is probabilistic, an AI agent does not randomly resolve into one category. It is always both. The software state and the worker state are concurrent realities that your organization must govern simultaneously.

When a team collapses an AI agent into its own single point of view, it is not discovering what the agent is. It is inadvertently ignoring the other dimension.

The consequence is a deterministic governance gap that organizations won't see until something goes wrong.

An agent with clean security credentials and no workforce accountability is an unaccountable worker. An agent with a defined role and no infrastructure oversight is an unsecured system. Either gap is enough to create exposure.

A New Instrument

In the Copenhagen interpretation, the measurement apparatus determines what you can observe. Carried over to agents, the lesson is blunt: if you use the wrong instrument, you will reach an incomplete conclusion with no way of knowing what you missed.

What enterprises need for AI agents is an instrument built to hold both states: one that can govern the agent as a member of the workforce and track it as a piece of infrastructure at the same time, without forcing a collapse that erases half the picture.

That is the premise behind Insygna. Agentic Workforce Management™ was designed with the dual nature of AI agents as a foundational constraint. The superposition is not a problem to resolve; it is an accurate description of what agents are. Every governance decision follows from accepting that, rather than routing around it.

The question is whether your current strategy is aligned with this reality, or whether it has already ignored the duality at the heart of the agentic workforce.