We have spent the last three years debating whether AI is safe. We have convened boards, passed legislation, published frameworks, and built entire compliance practices around a single question: can we trust the model?

It is the wrong question. Or rather, it is an incomplete one. The model is not what touches your enterprise. Agents do. And agents are a different problem entirely.

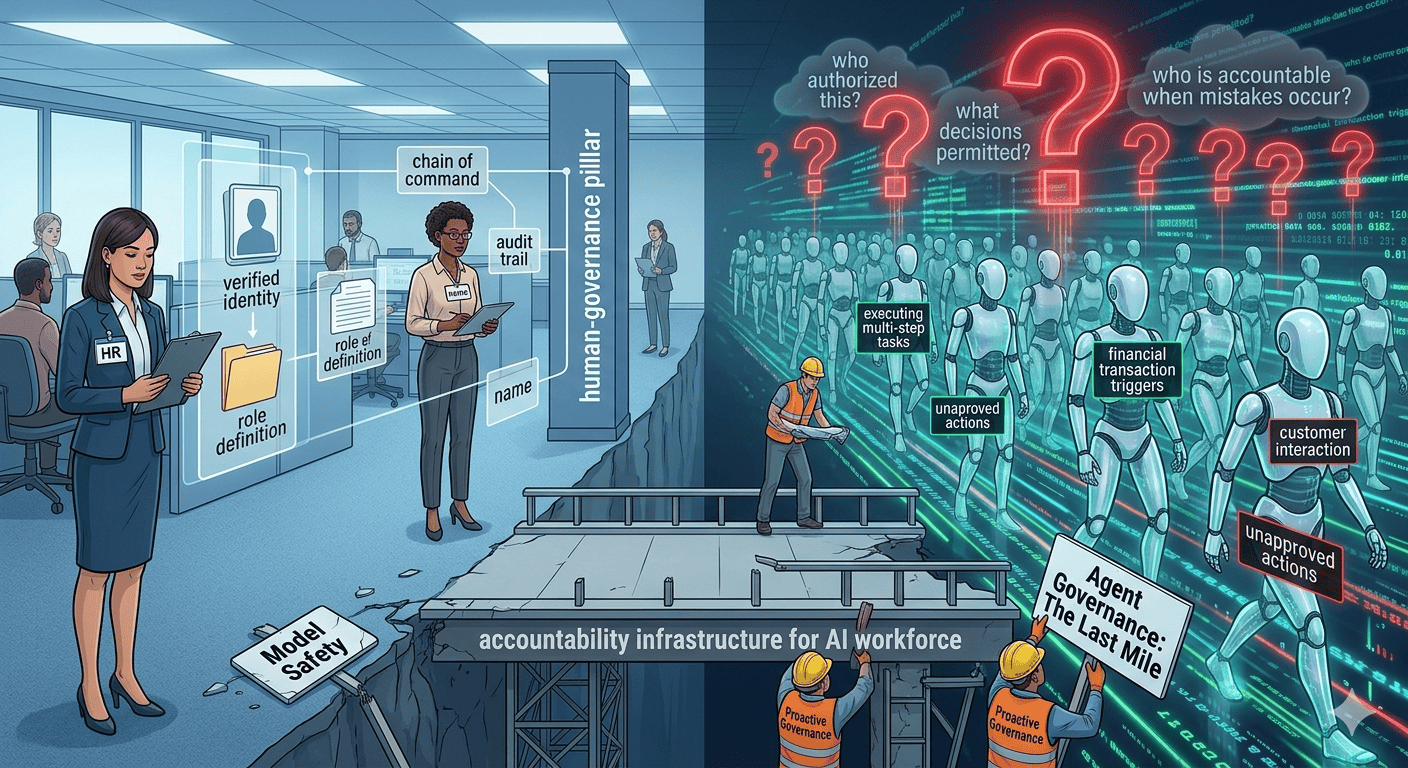

The Accountability Infrastructure We Take for Granted

Every human worker inside a regulated enterprise operates within a system most of us never think about because it works. They have a name. A verified identity. A defined role with a documented scope of authority. A background check. An employment record. A chain of command. A performance history. And when something goes wrong, when a decision is made incorrectly, when data is mishandled, when a customer interaction goes sideways, there is a system capable of answering the essential question: who did this, what were they authorized to do, and who is responsible?

That system did not emerge from good intentions. It emerged from hard experience. Decades of regulatory pressure, litigation, audit findings, and institutional failures taught enterprises that accountability infrastructure is not optional. It is the operating foundation on which everything else rests.

We built that infrastructure for human workers because human workers act on behalf of the enterprise. They touch customer data. They trigger financial transactions. They make decisions that carry legal and reputational weight.

AI agents are being developed to do much of the same work. And yet they exist almost entirely outside the Human Resources accountability infrastructure that companies have spent decades building.

The Workforce That Grew Faster Than Any HR Department Could Follow

In 2024, the conversation about AI in the enterprise was largely about copilots and assistants, tools that helped humans work faster. In 2025, the conversation shifted to agents, systems that work autonomously, execute multi-step tasks, and operate inside enterprise systems without a human in the loop for every action.

That shift happened quickly. Quietly. And without anyone asking the agentic AI governance questions that should have been asked first.

- Who authorized this agent to act?

- What data is it allowed to touch?

- What decisions is it permitted to make independently?

- Who is accountable when it makes a mistake?

- How do we know it is doing what we think it is doing?

These are not technology questions. They are workforce governance questions. And for human workers, every organization already has a function responsible for answering them. It is called Human Resources. It owns onboarding, role definition, compliance validation, performance oversight, and the audit trail that regulators and risk teams depend on.

There is no equivalent for AI agents. The emerging AI workforce, which in many organizations is already larger in operational scope than the human workforce it supports, has no HR department.

The Last Mile Problem of AI Governance

The industry's current response to AI risk has focused almost exclusively on the model layer. Safety benchmarks. Alignment research. Red teaming. Responsible AI frameworks published by foundation model providers. These efforts are necessary, and they matter. But they address the wrong point in the stack.

A model being safe tells you almost nothing about whether the agent built on it is trustworthy inside your specific enterprise environment.

Think of it this way. In the early days of broadband, telecommunications companies invested enormous capital laying fiber across thousands of miles. The backbone was world-class. But people still had terrible internet, because no one had solved the last mile. The connection between that backbone and the individual home remained an afterthought, and in many parts of the world the problem is still not solved.

AI governance has the same problem. We have invested heavily in the backbone: the model, the training data, the safety layer. But the last mile is the agent. The actor that actually enters your systems, executes your workflows, interacts with your customers, and makes decisions in your name. And that last mile remains almost entirely ungoverned.

What Enterprises Are Actually Deploying

Consider what an AI agent does inside a typical enterprise today.

It logs into systems using credentials. It reads and writes data across platforms that contain sensitive customer, financial, and operational information. It generates and sends communications on behalf of the organization. It initiates transactions that have real consequences. It makes decisions that in many cases trigger actions no human reviews before they happen.

Now ask: what is the identity of that agent? Not the model it runs on, but the agent itself. Does it have a verified identity that can be traced across systems? Does it have a defined and documented scope of authority? Is there a record of who created it, who approved its deployment, and what it has been authorized to do? Is there an audit trail sufficient to satisfy a regulator, an auditor, or a legal team when something goes wrong?

In most enterprises today, the answer to all of those questions is no. There is a log file. There is an API key. There is a permissions configuration someone set up during deployment. But there is no governance infrastructure. No chain of accountability. No institutional record that could survive scrutiny.

That is not a technology gap. It is an institutional gap. And it is growing larger every day as agent deployment accelerates.
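To see why the gap is institutional rather than technical, consider how little code the record itself requires. What follows is a minimal sketch, not a reference design: every name in it (`AgentRecord`, `AuditLog`, `authorize`, and the field names) is hypothetical and chosen only to mirror the questions above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical governance record for one deployed agent."""
    agent_id: str                    # identity of the agent itself, not the model
    created_by: str                  # who built it
    approved_by: str                 # who signed off on its deployment
    accountable_owner: str           # who answers when it makes a mistake
    allowed_actions: frozenset[str]  # documented scope of authority


@dataclass
class AuditLog:
    """Append-only record of every authorization decision (illustrative)."""
    entries: list[dict] = field(default_factory=list)

    def authorize(self, record: AgentRecord, action: str) -> bool:
        # Check the requested action against the agent's documented scope.
        allowed = action in record.allowed_actions
        # Log the decision either way, so denials are traceable too.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": record.agent_id,
            "action": action,
            "allowed": allowed,
            "accountable_owner": record.accountable_owner,
        })
        return allowed
```

The hard part is not writing this structure. It is deciding who fills in `approved_by` and `accountable_owner`, and who is obliged to keep them current.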

The Failure Nobody Has Named Yet

When a human employee causes a compliance failure, the response is difficult but manageable. There is a name. A file. A documented chain of events. Accountability can be established and remediation can begin.

When an AI agent causes a compliance failure, and this will happen, at scale, at a regulated enterprise, in a way that becomes public, the response will be something the industry is not prepared for. There will be a log entry. There will be a model version number. There will be a vendor who points to the customer agreement. There will be a customer who points to the configuration. And there will be a regulator, a plaintiff's attorney, or a board of directors asking a simple question that nobody can answer cleanly:

Who was responsible for this agent, and how was it governed?

The enterprises that cannot answer that question will not be able to explain their way out of what follows. And the enterprises that can answer it will be the ones that treated agent governance as infrastructure, not as an afterthought.

The Function That Does Not Exist Yet

The human workforce has HR. The financial systems have treasury and controls. The technology stack has IT governance and security. Every operational function within a corporate enterprise that carries institutional risk has a corresponding governance function in place to manage it.

The AI workforce has none of these. It is the fastest-growing operational function in the modern enterprise, and it is operating without the institutional infrastructure that every analogous function has required.

That infrastructure will be built. The only question is whether enterprises will embrace it proactively, before the failure that makes it unavoidable, or reactively, after the event that forces the conversation.

The organizations that adopt this paradigm first will not just reduce their risk exposure; they will establish the governance model that the rest of the industry is likely to follow. In domain after domain where institutional accountability has mattered, the companies that adopted an accountability framework early became the leaders. The companies that waited became the cautionary tales discussed by the media and regulators.

The emerging AI workforce is real. It is operating inside your enterprise right now. It is making decisions, touching data, and acting on your behalf.

It just does not have an HR department. Yet.